If the cars and the drones ever band together against us, we’re in trouble.

After a recent demo in which GNSS spoofing confused a Tesla, a researcher from Cyber@BGU reached out about an alternative bit of car tech foolery. The Cyber@BGU team recently demonstrated an exploit against a Mobileye 630 PRO Advanced Driver Assist System (ADAS) installed on a Renault Captur, and the exploit relies on a drone with a projector faking street signs.

The Mobileye is a Level 0 system, which means it informs a human driver but does not automatically steer, brake, or accelerate the vehicle. This unfortunately limits the “wow factor” of Cyber@BGU’s exploit video: below, we can see the Mobileye incorrectly inform its driver that the speed limit has jumped from 30 km/h to 90 km/h (18.6 to 55.9 mph), but we don’t get to see the Renault take off like a scalded dog in the middle of a college campus. It’s still a sobering demonstration of all the ways tricky humans can mess with immature, insufficiently trained AI.

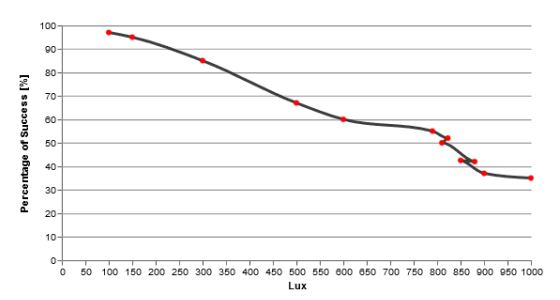

Ben Nassi, a PhD student at BGU and member of the team spoofing the ADAS, created both the video and a page succinctly laying out the security-related questions raised by this experiment. The detailed academic paper the university group prepared goes further in interesting directions than the video. For instance, the Mobileye ignored signs of the wrong shape, but the system turned out to be perfectly willing to detect signs of the wrong color and size. Even more interestingly, 100 ms was enough display time to spoof the ADAS, even though that’s brief enough that many humans wouldn’t spot the fake sign at all. The Cyber@BGU team also tested the influence of ambient light on false detections: it was easier to spoof the system late in the afternoon or at night, but attacks were reasonably likely to succeed even in fairly bright conditions.

Ars reached out to Mobileye for response and sat in on a conference call this morning with senior company executives. The company does not believe that this demonstration counts as “spoofing”—they limit their own definition of spoofing to inputs that a human would not be expected to recognize as an attack at all (I disagreed with that limited definition but stipulated it). We can call the attack whatever we like, but at the end of the day, the camera system accepted a “street sign” as legitimate that no human driver ever would. This was the impasse the call could not get beyond. The company insisted that there was no exploit here, no vulnerability, no flaw, and nothing of interest. The system saw an image of a street sign—good enough, accept it and move on.

To be completely fair to Mobileye, again, this is just a Level 0 ADAS. There’s very little potential here for real harm given that the vehicle is not meant to operate autonomously. However, the company doubled down and insisted that this level of image recognition would also be sufficient in semi-autonomous vehicles, relying only on other conflicting inputs (such as GPS) to mitigate the effects of bad data injected visually by an attacker. Cross-correlating input from multiple sensor suites to detect anomalies is good defense in depth, but even defense in depth may not work if several of the layers are tissue-thin.
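
To make the cross-correlation idea concrete, here is a minimal Python sketch of a camera-versus-map sanity check. It is an illustration only, not Mobileye's logic or anything described in the BGU paper: the function name `fuse_speed_limit`, the 20 km/h tolerance, and the idea of a map-derived speed limit looked up from a GPS position are all assumptions made for the example.

```python
from typing import Optional, Tuple


def fuse_speed_limit(
    camera_kmh: Optional[int],
    map_kmh: Optional[int],
    max_disagreement_kmh: int = 20,  # hypothetical tolerance, not a real spec
) -> Tuple[Optional[int], bool]:
    """Return (speed limit to show the driver, whether the reading was flagged).

    camera_kmh: limit read from a detected sign, or None if no sign was seen.
    map_kmh:    limit from a hypothetical GPS/map lookup, or None if unknown.
    """
    if camera_kmh is None:
        # Nothing detected visually: fall back to the map value, if any.
        return map_kmh, False
    if map_kmh is None:
        # No second opinion available: the camera reading passes unchecked.
        return camera_kmh, False
    if abs(camera_kmh - map_kmh) > max_disagreement_kmh:
        # The two sources disagree badly: keep the map value and flag it.
        return map_kmh, True
    return camera_kmh, False


if __name__ == "__main__":
    # The jump from the video: camera reads 90 km/h where the map says 30 km/h.
    print(fuse_speed_limit(90, 30))
    # Same projected sign, but no map data for this stretch of road.
    print(fuse_speed_limit(90, None))
```

Under these assumptions, the 30-to-90 jump from the video is flagged only when map data is available. When the map layer is missing, the projected sign passes straight through, and an attacker who also spoofs GNSS could corrupt the second opinion as well. That is the "tissue-thin layer" problem in miniature.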

This isn’t the first time we’ve covered the idea of spoofing street signs to confuse autonomous vehicles. Notably, a project in 2017 played with using stickers in an almost-steganographic way: alterations that appeared to be innocent weathering or graffiti to humans could alter the meaning of the signs entirely to AIs, which may interpret shape, color, and meaning differently than humans do.

However, there are a few new factors in BGU’s experiment that make it interesting. No physical alteration of the scenery is required; this means no chain of physical evidence, and no human needs to be on the scene. It also means setup and teardown time amounts to “how fast does your drone fly?” which may even make targeted attacks possible—a drone might acquire and shadow a target car, then wait for an optimal time to spoof a sign in a place and at an angle most likely to affect the target with minimal “collateral damage” in the form of other nearby cars also reading the fake sign. Finally, the drone can operate as a multi-pronged platform—although BGU’s experiment involved a visual projector only, a more advanced attacker might combine GNSS spoofing and perhaps even active radar countermeasures in a very serious bid at confusing its target.

Source: Ars Technica